The Last Guardrail

To Govern AI, We Must First Restore The Balance Of Powers

There has been a lot of discussion about the Anthropic negotiations, which ultimately fell apart when Anthropic was unwilling to accept certain requests by the Department of War. Negotiations had been ongoing for several months, and I am not taking sides, but for transparency’s sake I appreciate Anthropic’s focus on AI safety at its core. This was not a planned post, but I wanted to share some observations that I believe this episode brings into sharp focus and that must not be ignored.

The Wrong Story

Two weeks ago, on Friday, February 27th, reports emerged of a confrontation between the U.S. Department of Defense and AI company Anthropic over the use of its Claude model in government systems. Within hours, the dispute escalated into an extraordinary step: federal agencies were directed to cease using Anthropic technology immediately, and Anthropic was classified as a supply-chain risk.

Most people saw the episode as a story about AI safety. Would Claude be used for mass surveillance? Could an AI company hold the line against the most powerful military in the world? Those are genuine questions. But they are not the most crucial question raised by the week’s events. What we actually saw was something else: a stress test of constitutional infrastructure.

Anthropic refused to relax certain safety guardrails on its Claude model before a deadline imposed by the U.S. Defense Secretary. Later that same day, the President’s order changed the framework governing one of the most consequential technologies in human history. Not by legislation. Not by judicial review. By executive action, moving fast, with no meaningful check.

The AI safety question was answered poorly within days.

The constitutional question is still open.

The Vacuum

This didn’t happen in a vacuum, though it exploited one. I’ve written elsewhere about how the attention economy is eating democracy, hollowing out the deliberative capacity that foundational governance requires. That’s the backdrop here. Congress has not failed to govern AI because the problem is too hard. Legislating complex technology is always difficult. But today Congress operates inside an environment that makes sustained deliberation increasingly rare. The Pentagon didn’t exploit a legal loophole last week. It exploited that failure.

The Three Branches: A Case Study

If you want to understand how fragile our institutional infrastructure has become, consider the recent debate around the Epstein Files Transparency Act.

Last November, after months of debate, delays, and sustained public pressure, Congress passed legislation, with the House voting 427-1 and the Senate approving it unanimously. The President then signed it into law: a clear example of separation of powers, with the legislative and executive branches functioning as intended. Yet implementation stalled. Files were withheld. Deadlines passed. A law supported by nearly unanimous bipartisan votes was paid mere lip service by the very executive branch sworn to enforce it.

This isn’t a partisan story. It’s not even mainly about Epstein. It’s a warning for democracy: when institutional independence weakens, the government continues to function but stops delivering meaningful results. If we can’t reliably implement and enforce a law passed with a 427-1 vote in the House and a unanimous vote in the Senate, why would we think an AI governance law would fare any better?

The Mechanism

Executive overreach is as old as the office itself. That alone is not the main point here. The real story is what happens when the usual friction (deliberation, dissent, and institutional pushback) is removed. A company was blacklisted by executive order on a Friday afternoon. The DOJ ignored the 427-1 law. Senators who break ranks face immediate, coordinated campaigns to label them as turncoats before they’ve even finished speaking.

The guardrails didn’t fail due to poor design. They failed because the conditions that allow them to work have been worn down to near ineffectiveness. Social media has not only contributed to this erosion but also made unilateral executive action faster and politically safer than at any time in American history. Executive decisions are announced, defended, and politically normalized before traditional oversight mechanisms can even convene.

This is a structural observation, not a partisan one, but it requires an honest addendum. Executive overreach is not new, and no administration has been innocent of it. What is new is this: we have moved from administrations that tested institutional limits to one that openly contests the legitimacy of those limits altogether. That is a qualitative shift, not merely a difference of degree.

AI governance would land in that environment. The question is whether the institutions required to enforce it will still be standing.

The Question

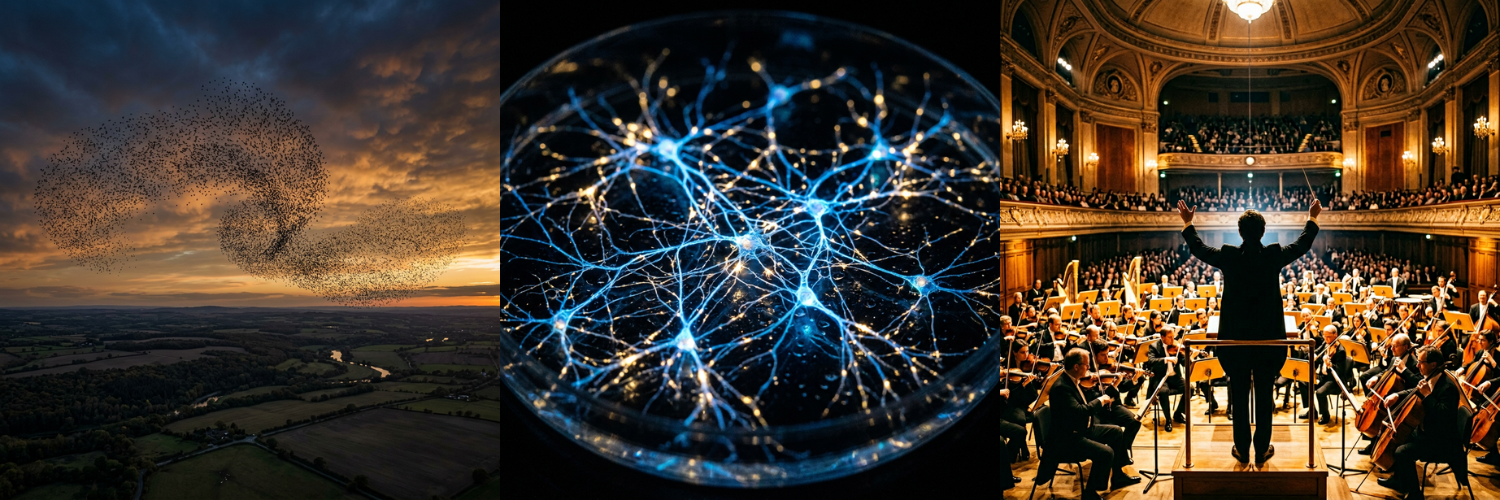

The three branches of government were not designed for AI. They were designed for something more fundamentally human: preventing any single actor from unilaterally rewriting the rules of power, regardless of how urgent their justification or how overwhelming their momentum.

That is exactly where we are.

The Founders understood that good intentions were never enough to prevent the concentration of power. Structures mattered. Constraints mattered. Independence mattered. The separation of powers wasn’t a bureaucratic inconvenience; it was the point.

Nobody in the AI debate is asking the right question. The argument is all about what to regulate, how to regulate it, and who gets a seat at the table. But none of that matters if the machinery required to enforce any of it no longer reliably functions. You can write the clearest framework imaginable, and we should, but without the institutional capacity to enforce it, you’ve only produced more window dressing. We already have plenty of that.

The question isn’t what AI governance should look like. The question is whether we still have the institutional capacity to govern anything at all, and whether the electorate understands that this, not any particular technology, is the crisis that demands their attention.

That answer is still ours to write. But only if we choose to pick up the pen.

Originally published on Substack.