Swarm Intelligence

The Architecture of Emergence

I played Doom. Almost obsessively. For nearly two years (1995 to 1997), my colleagues and I would wrap up long days on a construction site in Connecticut and disappear into networked deathmatches for a couple of hours or more (please don’t judge). It was, in the best possible way, a complete waste of time, but we had a lot of fun, and you could hear the howls down the hallway.

So when I read last week that 200,000 biological neurons, brain cells grown in a dish, had learned to play Doom, something clicked that went beyond the science. This wasn’t a benchmark. This wasn’t a leaderboard result. This was biology, doing something we built for fun, on hardware that didn’t exist when I was playing it.

Keith Dear, writing at Cassi AI, captured the moment well: the Cortical Labs demo has the same feel as DeepMind going from Atari to Go, something dismissed as a parlour trick until it suddenly isn’t. What looked like a curiosity in 2020 is an engineering platform in 2026.

The question is what we build with it. And more importantly, how.

The Portfolio Case

Keith’s broader argument deserves serious attention. The UK, and any nation that can’t outcompute the United States or China on raw infrastructure, has no viable path that runs straight through LLM scaling. The compute gap is too large, the energy costs too high, and the infrastructure investment too slow. Doing things differently isn’t contrarian. It’s the only rational strategy.

The portfolio case is right: organoid computing, neuromorphic systems, whole-brain emulation, biocompute. These aren’t science fiction anymore. Cortical Labs went from Pong in 2021 to Doom in 2025, the same arc DeepMind traced from Atari to AlphaGo, dismissed at every step until it wasn’t. The frontier is moving faster than policy, and the countries that treat alternative architectures as research curiosities rather than engineering priorities will regret it.

But a portfolio of substrates isn’t the same as a portfolio of architectural principles. And that distinction matters more than almost anything else in this conversation.

Swarms Are Powerful. They Are Not Wise.

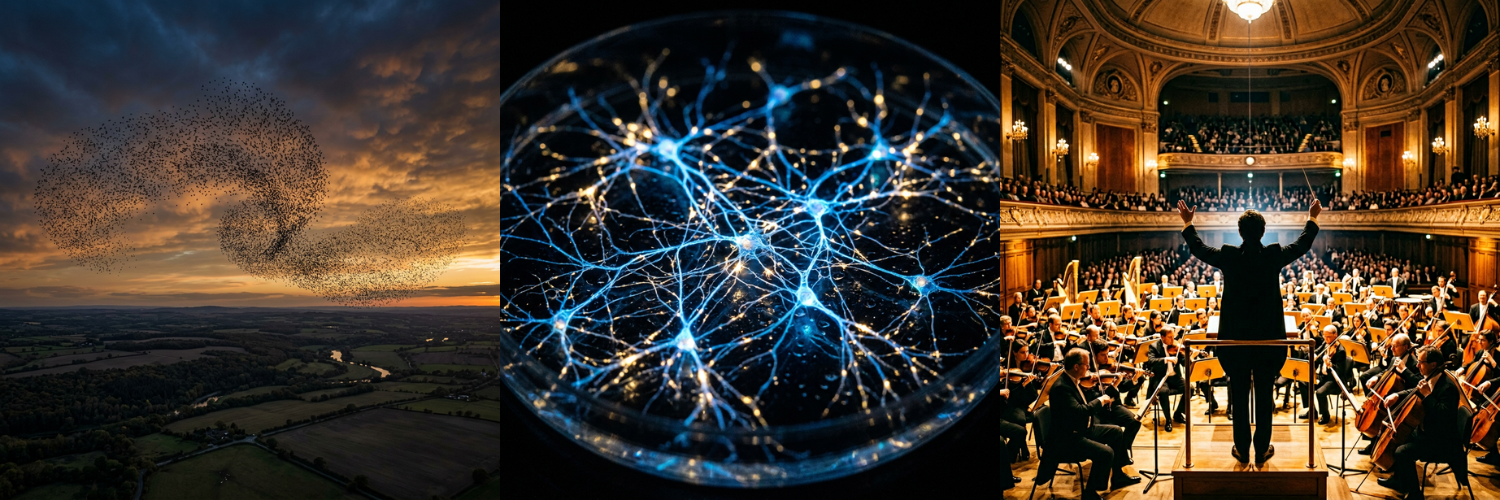

Swarm intelligence is one of nature’s most remarkable achievements. Ant colonies build sophisticated structures, wage wars, farm fungi, and solve optimization problems that no individual ant could comprehend. Fish schools evade predators with coordination that looks choreographed. Termites construct climate-controlled megastructures without a single architect on the payroll.

No individual agent understands any of it. Each follows simple local rules. Complexity, stunning, functional, apparently purposeful complexity, emerges from interaction at scale.

This is genuinely fascinating. But it’s ant-colony fascinating, not civilization fascinating. And that distinction is everything.

Robert Sapolsky, in Determined, uses swarm behavior to make a related point: what looks like intention is often just emergence. The appearance of design doesn’t require a designer. The appearance of intelligence doesn’t require judgment.

This is the frame I keep returning to when I watch the current excitement around multi-agent AI systems, networks of LLM agents interacting at scale, producing emergent behaviors their designers didn’t anticipate. Remarkable outputs. Apparent coordination. And in some cases, when left ungoverned, reputation systems are gamed, accounts are locked, and wallets are emptied. Not because the agents were malicious. Because emergence without executive function has no mechanism to catch when it’s optimizing for the wrong thing.

Swarms are powerful. They are not wise.

More Biological Doesn’t Mean More Governed

Here is where the biocompute excitement risks repeating a familiar mistake.

The dominant assumption of the LLM scaling era was seductive in its simplicity: more is better. More parameters, more data, more compute. Intelligence as a function of scale. We are now watching that assumption strain under its own weight: the critics Keith himself cites, the architectural limits that more silicon alone cannot solve.

But swap silicon for organoids and the assumption can quietly reassert itself. More biological substrate. More emergent complexity. More neurons in the dish. If the design philosophy doesn’t change, the substrate swap changes less than we hope.

The morphogenetics and cryptobiosis researchers are onto something real: let structure emerge from local rules, let dormancy preserve identity under stress. These are genuine biological insights with genuine engineering implications. But emergence still needs governance. A system that grows like biology, without the executive architecture that biology also evolved, isn’t more intelligent. It’s more complex. Those are not the same thing.

The history of AI is littered with approaches that produced impressive emergent behavior and mistook it for progress toward intelligence. Swarms, cellular automata, and genetic algorithms were each genuinely fascinating and each genuinely limited by the same ceiling: no judgment, no executive function, no capacity to evaluate the system’s own outputs against anything beyond the immediate reward signal.

Being more biological doesn’t automatically mean being more governed. And ungoverned complexity, at scale, is not a step forward. It’s a faster way to get to the same dead end.

The Swarm Plus the Architecture

This is where Augmented Human Intelligence parts ways with both the scaling and swarm paradigms.

AHI is not an argument against emergence. It’s an argument about what governs it. The brain itself is a swarm of sorts, billions of neurons, no central controller, staggering complexity arising from local interactions. But evolution didn’t stop there. It layered executive function on top: the prefrontal cortex, the capacity for deliberation, the ability to override immediate impulse in service of longer-horizon judgment. The swarm plus the architect.

That is the design principle worth carrying forward. Not ‘can we build systems that grow like biology?’ but ‘can we build systems where human judgment remains the executive layer as they grow?’

The difference is not subtle. A system in which humans observe emergent behavior and occasionally intervene is not the same as one designed from the ground up to keep human attention in the governing role. The first relegates us to auditors of complexity we didn’t choose and can’t fully understand. The second treats human judgment as the irreplaceable ingredient it actually is.

Keith is right that Britain, and every nation serious about this, needs a portfolio of architectural bets. I’d add one more dimension to that portfolio: not just which substrates, but which governance architectures. Biocompute without that question answered is just a more biological version of the same problem.

The ant colony is extraordinary. But we didn’t build civilization by becoming better ants.

Awe Is Not a Design Principle

The Cortical Labs demo is genuinely awe-inspiring. Brains in a dish, playing Doom, accessible via the web. The whole-brain emulation work is, depending on your disposition, either thrilling or terrifying, probably both. The frontier is moving faster than most people’s mental models of what’s possible, and Keith is right that the countries and companies treating these developments as distant curiosities will regret it.

But awe is not a design principle.

The question worth sitting with isn’t whether AI can grow like biology. Biology has been solving hard problems for four billion years, and we should absolutely be paying attention. The question is whether we build systems that govern their own growth or assume that complexity, at sufficient scale, eventually produces wisdom.

History, biological and human, suggests it doesn’t. Evolution produced the prefrontal cortex because ungoverned emergence wasn’t enough. Civilization developed institutions because collective intelligence without executive architecture produces cascades rather than decisions. The swarm is powerful. The swarm plus the architect is transformative.

We are at a genuinely remarkable moment. Brains in dishes. Fly minds running in simulation. Intelligence emerging from substrates we are only beginning to understand. The portfolio of bets is the right frame.

Just make sure governance is one of them.

Originally published on Substack.