After the Music Stops

Who Will Bear the Cost of the AI Correction

This is the third and final installment in a series examining the cracks in the AI scaling narrative, from the technical limits, to the financial fragility, to who bears the cost when the correction comes.

In the first piece in this series, I argued that the technical foundations of the AI scaling thesis are cracking. Turing Award winners and field pioneers are converging on a shared diagnosis: brute-force scaling of large language models won’t yield general intelligence, and something architecturally different is needed.

In the second, I followed that technical critique to its financial implications. The AI boom is structurally fragile: narrow market concentration, lopsided revenue, a 95% enterprise pilot failure rate, and $1.5 trillion in projected debt predicated on productivity gains that may never materialize.

This final piece asks the question that neither Silicon Valley nor Wall Street wants to answer: when the correction comes, who actually pays?

To be clear: AI is not worthless. Large language models have genuine productivity applications, and many of the underlying technical advances are real and durable. The question isn’t whether AI creates value. It’s whether current valuations, capital allocation, and policy assumptions reflect a realistic assessment of that value, or whether they require something closer to general intelligence to justify themselves. If the latter, and the first two pieces in this series argue that it is, then the cost of the gap between expectation and reality has to land somewhere.

Not on the investors. Not on the executives. The cost falls downstream, onto retirement accounts, communities, workers, and democratic institutions that are being quietly restructured around assumptions that may not hold.

It’s Not the Investors

The mythology of tech busts centers on investor losses. Pets.com, Theranos, FTX. The names change; the pattern doesn’t. Venture capital absorbs the hit and raises the next fund. Sequoia survived the dot-com crash. Andreessen Horowitz kept right on investing. The hyperscalers writing off AI infrastructure will take earnings hits, not existential ones.

The real exposure is further down the chain, in places most people don’t think to look.

Start with your retirement account. The Magnificent Seven now account for roughly a third of the S&P 500’s total market capitalization and were responsible for more than 40% of the index’s total return in 2025. That means virtually every 401(k) and public pension fund in America is making a concentrated bet on AI, whether the account holder knows it or not. As one strategic advisor put it, the danger isn’t just the Mag Seven falling; it’s that the rest of the index is too weak to pick up the slack when they do. A correction in these seven companies isn’t a venture capital problem. It’s a retirement security problem.
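To make the concentration math concrete, here is a minimal back-of-the-envelope sketch. It assumes a simplified two-bucket, cap-weighted index; the one-third weight comes from the figure above, while the 30% drawdown is a purely hypothetical number chosen for illustration, not a forecast. The function names are my own.

```python
def index_return(weight_top: float, return_top: float, return_rest: float) -> float:
    """Return of a cap-weighted index split into two buckets."""
    return weight_top * return_top + (1.0 - weight_top) * return_rest


def required_rest_return(weight_top: float, return_top: float, target: float = 0.0) -> float:
    """Return the rest of the index needs for the whole index to hit `target`."""
    return (target - weight_top * return_top) / (1.0 - weight_top)


MAG7_WEIGHT = 1 / 3            # "roughly a third" of index market cap, per the text
HYPOTHETICAL_DRAWDOWN = -0.30  # assumption for illustration only

# Case 1: the other ~493 companies stay flat while the seven fall.
index_if_rest_flat = index_return(MAG7_WEIGHT, HYPOTHETICAL_DRAWDOWN, 0.0)

# Case 2: how much the rest must gain just to keep the index at zero.
rest_needed_to_offset = required_rest_return(MAG7_WEIGHT, HYPOTHETICAL_DRAWDOWN)

print(f"Index return if the rest is flat: {index_if_rest_flat:.1%}")
print(f"Rest-of-index gain needed to break even: {rest_needed_to_offset:.1%}")
```

Under those assumptions, a 30% fall in seven companies takes roughly 10% off the whole index, and the remaining companies would need to gain about 15% just to hold a typical index fund level. That is the "slack" the rest of the market would have to pick up.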

Then there are the communities. Cities and states across the country are competing to host AI data centers, offering tax incentives, infrastructure commitments, and rezoning in exchange for the promise of jobs and economic development. Just this week, Illinois Governor JB Pritzker announced a two-year suspension of data center tax incentives, citing concerns about energy grid strain, rising consumer electricity costs, and whether these facilities are “financially sustainable over time.” In Indiana, local officials denied permission for a proposed data center after community pushback. South Dakota is passing a “Data Center Bill of Rights for Citizens.” The pattern is familiar: massive public investment in facilities that consume enormous energy, employ remarkably few people, and could become stranded assets if AI demand doesn’t sustain current projections.

And then there’s the debt. JPMorgan projects $1.5 trillion in AI-related bond issuance by 2030, and that debt is held by institutional investors, insurance companies, and pension funds. That debt isn’t inherently dangerous if it’s financing genuine productivity transformation. But if enterprise AI adoption continues to stall, with only 5% of pilots reaching production and 42% of initiatives being scrapped, then we’re left with bonds backed by phantom revenue. And the holders of that debt are often the same pension funds and insurance companies already overexposed to the Magnificent Seven through equity holdings. That’s a double exposure: the same retirement savers are concentrated in AI stocks and holding the bonds underwriting AI infrastructure. The 2008 parallel isn’t the technology; it’s the debt structure underneath it.

The Labor Gap

This is where the conversation gets personal, and where the cost becomes hardest to measure.

The public discourse on AI and jobs swings between two poles: “AI will take all the jobs” and “AI is just a tool.” Both are wrong. The reality is messier, more specific, and already underway.

Entry-level job postings in the United States have declined roughly 35% since January 2023, according to labor research firm Revelio Labs. That period also coincides with aggressive monetary tightening and a broader post-pandemic recalibration of labor demand, so AI isn’t the sole driver. But the structural pattern underneath the cyclical noise is clear. SignalFire found a 50% decline in new hires with less than one year of post-graduate experience at major tech companies between 2019 and 2024. Recent graduates aged 22 to 27 face unemployment rates near 5.8%, the highest since 2021, against an overall rate of 4.2%. This isn’t a future threat. It’s a present reality, largely invisible in headline employment data.

The mechanism is quiet and structural. Companies aren’t announcing mass layoffs; they’re simply not backfilling roles when people leave. Federal Reserve Chair Jerome Powell has described this as a “low-hiring, low-firing” equilibrium. It sounds stable. It’s not. It means the displacement is happening through absence rather than announcement, concentrated on the youngest and most vulnerable workers, eroding the bottom rungs of the career ladder without anyone noticing until the ladder is gone.

Customer service, content production, paralegal work, entry-level coding: specific categories are contracting. Not because AI fully replaces them, but because it reduces headcount enough to matter at scale. And there’s a perverse irony embedded in this dynamic. AI companies have a financial incentive to overstate displacement potential because it inflates their addressable market. The labor threat and the investment thesis are entangled. The same narrative that drives valuations also drives fear.

I know something about this firsthand. In September 2023, I was part of a reduction in force after 25 years in technology consulting. The industry I’d built a career in was restructuring, and AI was part of the reason. I had a choice: compete for a shrinking pool of traditional roles, or invest in understanding what was actually happening. I chose the latter, spending over a year researching the intersection of human cognition, AI architecture, and evolutionary biology. Not because I had a comfortable runway, but because I believed the only durable response to AI displacement was to understand the technology deeply enough to work with it rather than against it.

What struck me most during that period wasn’t the technology itself. It was the absence of any coherent framework to help people navigate the transition. The federal response, to this day, is plans about plans. The White House AI Action Plan, released in July 2025, proposes an “AI Workforce Research Hub” and “rapid retraining initiatives,” but these remain proposals, not funded programs. A mandated plan to reach one million new apprentices was due by late August 2025; as of this writing, no such plan has been published. The existing federal training infrastructure, primarily the Workforce Innovation and Opportunity Act, was designed for trade-related displacement and factory closures, not for the continuous, distributed, invisible erosion of knowledge work.

The geographic dimension compounds the problem. AI jobs concentrate in a handful of metros. Displacement is distributed broadly. It’s not hard to picture: a mid-sized city that lost its call center to automation, watched entry-level coding jobs evaporate through attrition, and offered tax incentives for a data center that consumed grid capacity, employed a few dozen people, and may not outlast its tax abatement. That city exists in every state. This mirrors the manufacturing hollowing-out pattern, except it moves faster and affects white-collar workers who assumed they were insulated.

The Governance Deficit

The economic consequences are serious. The political consequences may be worse.

Here’s what doesn’t get discussed enough: the infrastructure being built for the AI boom doesn’t disappear when valuations correct. After the dot-com bust, the fiber optic cables that bankrupt companies had laid were bought for pennies on the dollar and became the backbone of the modern internet. That was mostly benign. The AI equivalent is massive behavioral datasets, surveillance capabilities, and algorithmic decision-making systems. When the correction comes, these assets don’t evaporate. They get acquired at fire-sale prices by buyers whose purposes may have nothing to do with the productivity narrative that justified building them in the first place.

When populations lose economic leverage through job displacement while simultaneously living under systems designed to monitor and optimize them, the conditions for democratic governance weaken. This isn’t dystopian speculation. It’s the observable pattern in every context where economic marginalization and pervasive surveillance overlap. The question isn’t whether these tools will be misused; it’s whether the institutional safeguards exist to prevent it.

They largely don’t. The United States has no comprehensive federal AI law. The Trump administration revoked Biden-era AI safety requirements on its first day in office and has since signed an executive order directing the Attorney General to establish an AI Litigation Task Force to challenge state-level AI regulations deemed “inconsistent” with federal policy. Over 1,000 AI-related bills were introduced across states in 2025, reflecting genuine demand for oversight. The federal response has been to threaten litigation against the states attempting to fill the vacuum while offering nothing comparable in return.

The net effect is a moral hazard: the companies whose concentration of power most warrants oversight are shielded from local accountability, while the communities bearing the grid strain, the energy costs, and the employment shortfalls have no federal framework to fall back on.

This is where the augmentation framework from the first piece in this series becomes more than an architectural preference. Systems designed to augment human decision-making preserve human agency by design. They keep humans in the loop not as a nicety but as a structural feature. Systems designed to replace human decision-making don’t. The governance question isn’t just “how do we regulate AI?” It’s whether we’re building systems that are compatible with democratic self-governance at all.

The Concentration Problem

Whether the AI boom continues or corrects, the infrastructure is concentrating in very few hands. Microsoft, Google, Amazon, and a handful of others control the foundational compute layer. This is the equivalent of a few companies owning the roads, the ports, and the electrical grid simultaneously.

The most promising counterforce is open-source AI. As I noted in the second piece, open-source models are closing the performance gap with closed-source leaders, and enterprises are increasingly drawn to smaller, specialized, fine-tuned models. But open-source AI depends on compute access, which circles back to the same hyperscalers. Distributed architecture requires distributed infrastructure. Without it, “open” is a label, not a reality.

The CHIPS Act and semiconductor export controls, discussed in the second piece, are framed as competitive tools against China, not as instruments for domestic market structure. The United States is subsidizing concentration while calling it competition. The alternatives remain largely unexamined in policy circles: public investment in open compute infrastructure, antitrust enforcement that addresses AI-specific concentration, and data portability requirements that prevent lock-in.

Meanwhile, China isn’t pursuing a single scaling approach. It’s diversifying architecturally while maintaining state oversight of strategic technology. Europe can’t compete in the capital-intensive scaling race but could lead in alternative architectures and governance frameworks. The United States is currently locked into the scaling paradigm by the very corporate interests that benefit most from it.

What Augmentation Actually Requires

This series began with a technical argument: the scaling skeptics are right, and Augmented Human Intelligence offers a more promising architectural path than the brute-force pursuit of AGI. The second piece showed that the financial structure built on the AGI thesis is fragile and concentrated. This piece has argued that the human consequences of getting this wrong fall disproportionately on people who had no say in the bet.

Those three threads converge on a single point. AHI isn’t just a better technical framework. It’s the only path that keeps humans economically relevant, politically empowered, and socially integrated in an age of increasingly capable machines. But it won’t happen by default. The economic incentives, the capital flows, and the policy environment all currently favor concentration, replacement, and scale.

What augmentation actually requires is deliberate choice at every level. Architectural choices: modular, specialized, human-in-the-loop systems rather than monolithic models chasing general intelligence. Policy choices: antitrust enforcement, public compute infrastructure, workforce transition programs that match the scale of the disruption. Ownership choices: open-source development, distributed access, accountability structures that prevent the technology from concentrating power in the hands of those who build it.

None of these are inevitable. All of them are possible.

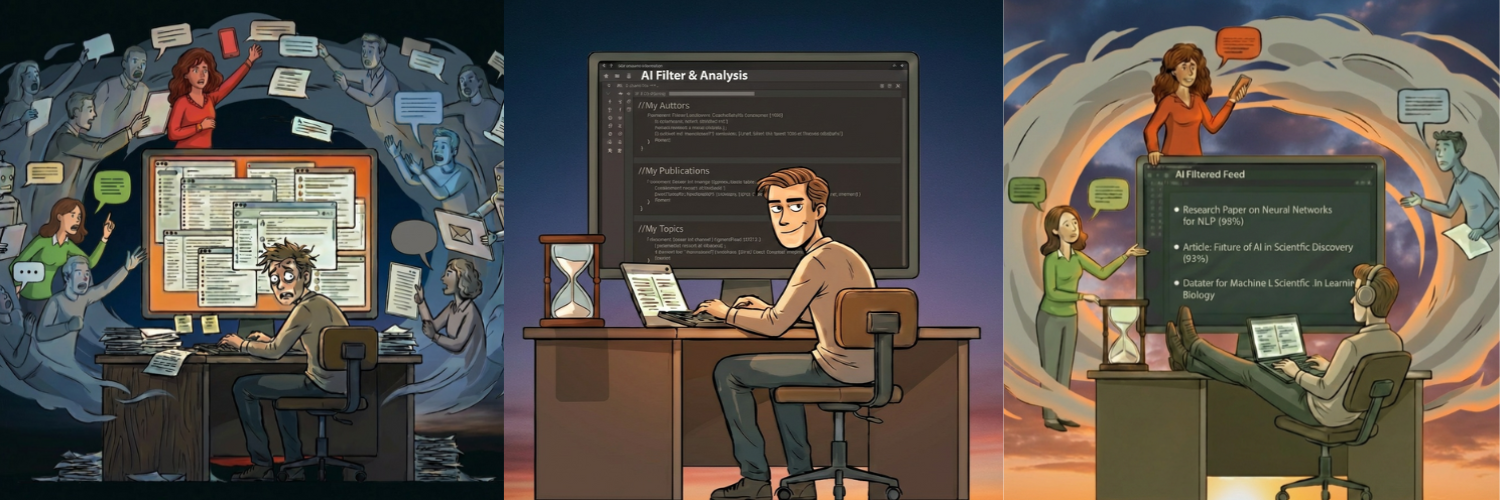

I mentioned earlier that I was part of a reduction in force in September 2023. What I didn’t say is where that led. Rather than compete for a shrinking pool of roles in an industry being restructured around me, I spent over a year building exactly the kind of human-AI collaboration I’ve been advocating for: a system called MARS (Multi-Agent Research System) that monitors AI-related content, scores it against my research corpus, and generates strategic engagement opportunities. This entire series, including the research synthesis, the financial analysis, and the policy arguments, was produced through that system. I used AI tools at every stage of retrieval, drafting, fact-checking, and revision. But the judgment, the framing, the argument, the decision about what matters and why: that’s mine. The AI handled pattern recognition and tireless iteration. I handled meaning-making.

I’m not offering a theoretical framework. I’m offering evidence. This is what augmentation looks like in practice, and it’s available to anyone willing to invest the time in understanding these tools deeply enough to direct them rather than be directed by them.

The orchestra can keep expanding. Or we can decide what music we actually want to hear, and who gets to play.

Originally published on Substack.